GANs often suffer from "mode collapse", in which they fail to generalize properly and miss entire modes of the input data. For example, a GAN trained on the MNIST dataset, which contains many samples of each digit, might nevertheless omit a subset of the digits from its output. Some researchers see the root problem as a weak discriminative network that fails to notice the pattern of omission, while others blame a poor choice of objective function.
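One rough way to make the omission of modes concrete is to label each generated sample with its class and check which classes never appear. The sketch below assumes such labels are already available, for instance from a separately trained digit classifier; the function names `missing_modes` and `mode_coverage` and the sample labels are hypothetical and chosen only for illustration.

```python
from collections import Counter


def missing_modes(generated_labels, expected_modes):
    """Return the expected modes that never appear among the generated labels."""
    seen = Counter(generated_labels)
    return [mode for mode in expected_modes if seen[mode] == 0]


def mode_coverage(generated_labels, expected_modes):
    """Return the fraction of expected modes that appear at least once."""
    expected = list(expected_modes)
    missing = missing_modes(generated_labels, expected)
    return 1.0 - len(missing) / len(expected)


# Hypothetical class labels for ten generated digit images (assumed data).
labels = [0, 1, 1, 3, 3, 3, 7, 9, 9, 0]
print(missing_modes(labels, range(10)))  # [2, 4, 5, 6, 8]
print(mode_coverage(labels, range(10)))  # 0.5
```

A coverage well below 1.0 on a large sample would be consistent with mode collapse, although this check says nothing about the quality or diversity of samples within each class.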

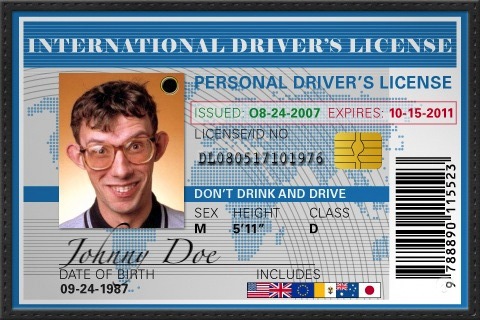


The core idea of a GAN is "indirect" training through the discriminator, which is itself updated dynamically. The generator is therefore not trained to minimize the distance to a specific image, but rather to fool the discriminator. This enables the model to learn in an unsupervised manner.

The generative network generates candidates while the discriminative network evaluates them. The contest operates in terms of data distributions. Typically, the generative network learns to map from a latent space to a data distribution of interest, while the discriminative network distinguishes candidates produced by the generator from the true data distribution. The generative network's training objective is to increase the error rate of the discriminative network, that is, to "fool" the discriminator by producing novel candidates that it judges to be real (part of the true data distribution).

A known dataset serves as the initial training data for the discriminator. Training it involves presenting it with samples from the training dataset until it achieves acceptable accuracy. The generator trains based on whether it succeeds in fooling the discriminator. Typically the generator is seeded with randomized input sampled from a predefined latent space. Thereafter, candidates synthesized by the generator are evaluated by the discriminator. Independent backpropagation procedures are applied to both networks so that the generator produces better samples, while the discriminator becomes more skilled at flagging synthetic samples. When used for image generation, the generator is typically a deconvolutional neural network, and the discriminator is a convolutional neural network.
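The alternating updates described above can be illustrated with a deliberately tiny example. The sketch below is not a practical GAN: it assumes one-dimensional data drawn from a Gaussian, a linear generator `G(z) = a*z + b`, a logistic discriminator `D(x) = sigmoid(w*x + c)`, hand-derived gradients, and the commonly used variant in which the generator raises `log D(G(z))`. All names, hyperparameters, and the target distribution are assumptions made for illustration.

```python
import math
import random


def sigmoid(t):
    """Logistic function, written to avoid overflow for large |t|."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=200, batch=32, lr=0.05, real_mean=4.0, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters: G(z) = a*z + b
    w, c = 0.1, 0.0  # discriminator parameters: D(x) = sigmoid(w*x + c)

    for _step in range(steps):
        # Discriminator step: gradient ascent on log D(x) + log(1 - D(G(z))).
        grad_w = grad_c = 0.0
        for _sample in range(batch):
            x = rng.gauss(real_mean, 1.0)       # real sample
            fake = a * rng.gauss(0.0, 1.0) + b  # generated sample
            d_real = sigmoid(w * x + c)
            d_fake = sigmoid(w * fake + c)
            grad_w += (1.0 - d_real) * x - d_fake * fake
            grad_c += (1.0 - d_real) - d_fake
        w += lr * grad_w / batch
        c += lr * grad_c / batch

        # Generator step: gradient ascent on log D(G(z)), i.e. try to make
        # the discriminator classify generated samples as real.
        grad_a = grad_b = 0.0
        for _sample in range(batch):
            z = rng.gauss(0.0, 1.0)             # latent input
            fake = a * z + b
            d_fake = sigmoid(w * fake + c)
            g = (1.0 - d_fake) * w              # d log D(fake) / d fake
            grad_a += g * z
            grad_b += g
        a += lr * grad_a / batch
        b += lr * grad_b / batch

    return (a, b), (w, c)


(gen_a, gen_b), (disc_w, disc_c) = train_toy_gan()
print("generator:", gen_a, gen_b)
print("discriminator:", disc_w, disc_c)
```

Note that the generator never sees a real sample directly; its parameters change only through the discriminator's output, which is the "indirect" training the text describes. With models this simple the parameters tend to oscillate rather than settle, so the printed values should not be read as a converged solution.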

A GAN trained on photographs, for instance, can generate new photographs that look at least superficially authentic to human observers, having many realistic characteristics. Though originally proposed as a form of generative model for unsupervised learning, GANs have also proved useful for semi-supervised learning, fully supervised learning, and reinforcement learning.

A generative adversarial network (GAN) is a class of machine learning frameworks designed by Ian Goodfellow and his colleagues in June 2014. Two neural networks contest with each other in a zero-sum game, where one agent's gain is another agent's loss. Given a training set, this technique learns to generate new data with the same statistics as the training set.
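The zero-sum game mentioned here is commonly written as a minimax problem over a single value function, as in the original 2014 formulation. The symbols are not defined elsewhere in this text and are introduced only for this sketch: $G$ is the generator, $D$ the discriminator (outputting the probability that its input is real), $p_{\text{data}}$ the data distribution, and $p_z$ the distribution over the latent space.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to increase $V$ by assigning high probability to real samples and low probability to generated ones, while the generator tries to decrease the same quantity, so one network's gain is the other's loss.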